Build Your AI Governance Framework: A 3-Page Blueprint

Okay so we got a cease-and-desist this week because a hiring manager used ChatGPT to write a job description and it plagiarized a competitor's proprietary language. Word for word. On LinkedIn. For 48 hours.

Our AI policy? 247 words from 2024 that basically said "use your judgment." Cool. Very helpful.

So I built the thing I wish I'd had before this happened: a 3-page framework built around the 7 questions every AI policy needs to answer. Three layers, seven questions, each with the tension you'll have to navigate. It won't give you all the answers, but it will help you ask the right questions.

Short on time? Download just the framework here.

Here's the full story.

__________________________________________________________________________________

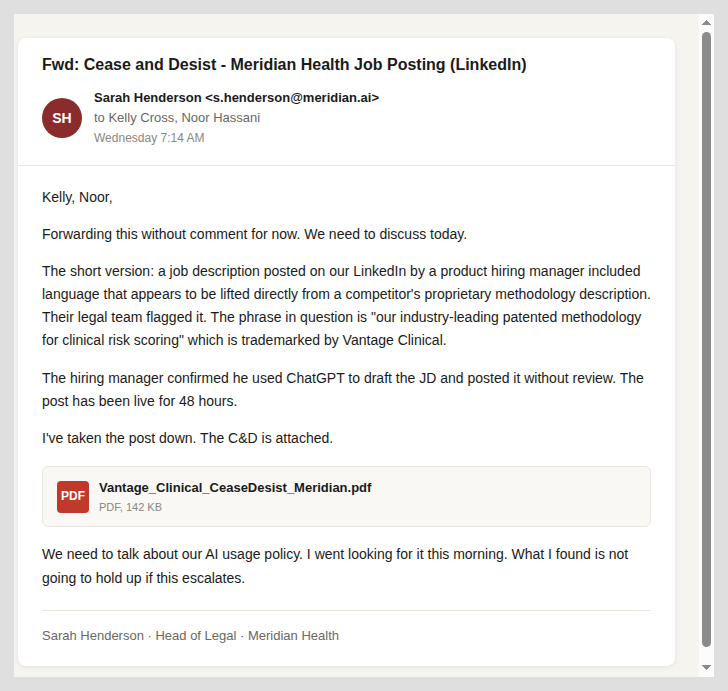

The email arrived at 7:14am on a Wednesday. It was from our Head of Legal, forwarded without comment. Attached: a cease-and-desist letter from a competitor's legal team.

Here's what happened. A hiring manager on our product team used ChatGPT to draft a job description for a new role. The AI pulled from its training data and produced language that closely mirrored a competitor's JD, including the phrase "our industry-leading patented methodology for clinical risk scoring." That phrase belongs to another company. It describes their proprietary process. It ended up on our LinkedIn job post, word for word.

The competitor's legal team found it within 48 hours. The cease-and-desist accused Meridian of misrepresenting their proprietary methods as our own.

The hiring manager's defense was simple: "I didn't write it. The AI did. I just posted it."

__________________________________________________________________________________

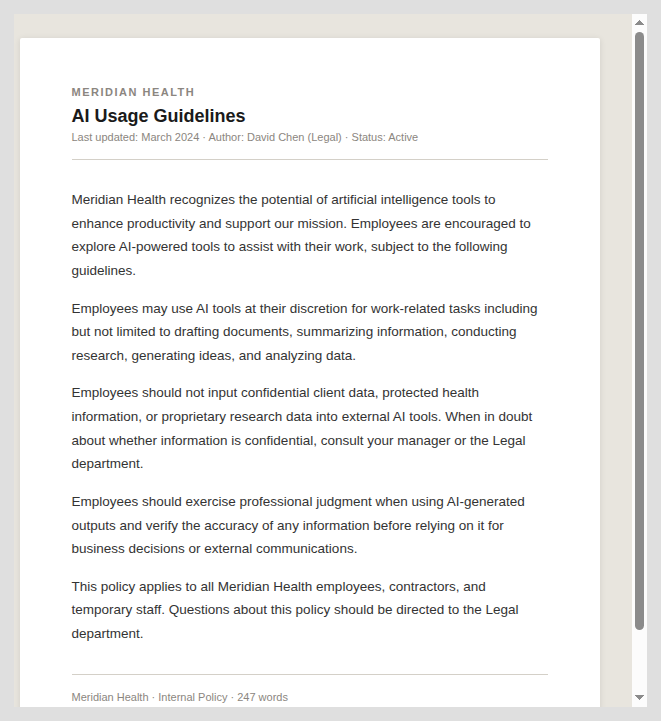

I went looking for Meridian's AI policy. Found it in a shared Drive folder. 247 words, written in 2024 by someone in Legal who has since left the company.

The entire thing boiled down to: use AI at your discretion, don't input confidential client data, exercise professional judgment. No approved tool list. No review requirements for external-facing content. No accountability framework for when AI output causes harm.

247 words does not constitute a policy.

__________________________________________________________________________________

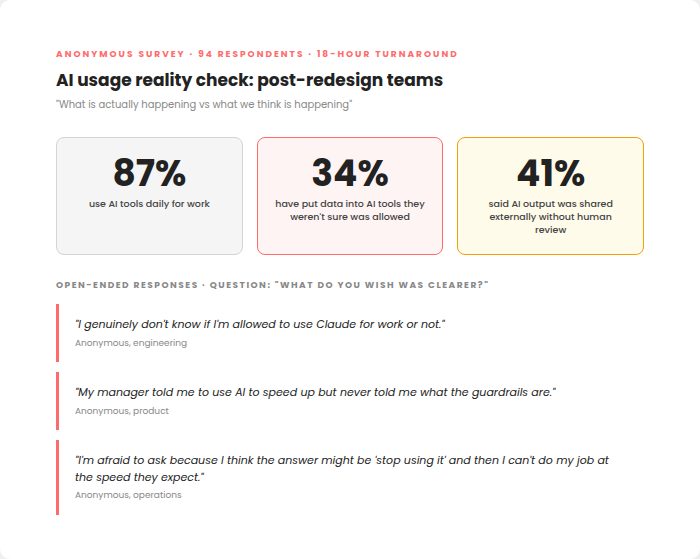

I sent a five-question anonymous survey to every employee who'd been through the unbundled org redesign. 94 people. Results came back in 18 hours.

87% used AI tools daily. 34% had entered data into AI tools without being sure it was allowed. 41% said AI output had been shared externally or used in a decision without human review.

"I genuinely don't know if I'm allowed to use Claude for work or not."

"My manager told me to use AI to speed up but never told me what the guardrails are."

"I'm afraid to ask because I think the answer might be 'stop using it' and then I can't do my job at the speed they expect."

The company expects AI-level speed but hasn't given permission or guardrails for AI-level tools. So people use them quietly and hope nothing breaks.

__________________________________________________________________________________

Thursday morning. Noor's office.

I brought the survey data, the cease-and-desist, and the 247-word policy.

"This isn't a policy," Noor said after fifteen seconds.

"No. It's not. But we don't need a 30-page document. We need to answer seven questions."

I walked her through three layers.

Layer 1: The Foundation. Four structural questions every company has to answer. What tools are approved, and how fast can new ones get approved? What data is off-limits, and how does an employee know in the moment? Where does AI output need human review, and what does "review" actually mean at each risk level? Who is accountable when AI output causes harm?

Each question has a tension. If every tool needs IT approval, velocity drops and people go around the process. If you approve everything, you lose visibility. The policy has to find the line for your company.

Layer 2: The Sensitive Zone. One question, the most important one. When can AI be involved in decisions about people's careers? Hiring, firing, promoting, evaluating. Several states already have specific laws. "Human in the loop" sounds like an answer until you define what it requires in practice.

Layer 3: The Human Layer. Two questions most governance frameworks skip entirely. How do you roll out this policy without triggering a fear response? And how do you keep it alive when AI changes faster than your review cycle?

Noor looked at the framework for a long time.

"How long to build this?"

"Legal, IT, and me in a room for two days. A week to draft. A week to review. A rollout plan that doesn't make people panic."

"You have three weeks. And Kelly, I want the hiring manager who posted that JD involved in the rollout. Not as punishment. Because he needs to understand why this matters, and the rest of the company needs to see that we're fixing the system, not blaming the person."

__________________________________________________________________________________

The full framework is ready. Three layers, seven questions, each with the tension you'll have to navigate and the follow-through questions your legal and IT teams need to answer. It fits on three pages.

Download the AI Governance Blueprint here.

Take it to your next meeting with Legal and IT. Put it on the table. If your policy doesn't address all seven, you're exposed in ways you probably haven't mapped yet.
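If you want a quick way to run that check before the meeting, here's a minimal sketch in Python. It lists the seven questions from the three layers above and reports which ones a policy leaves unanswered. The names (`Question`, `SEVEN_QUESTIONS`, `unanswered`) and the sample answers are my own illustration and not part of the blueprint itself. Treating the old 247-word policy as answering only the data question is one reading of it, not a formal audit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    layer: str
    text: str


# The seven questions, grouped by layer, as described in the post.
SEVEN_QUESTIONS = [
    Question("Foundation", "What tools are approved, and how fast can new ones get approved?"),
    Question("Foundation", "What data is off-limits, and how does an employee know in the moment?"),
    Question("Foundation", "Where does AI output need human review, and what does review mean at each risk level?"),
    Question("Foundation", "Who is accountable when AI output causes harm?"),
    Question("Sensitive Zone", "When can AI be involved in decisions about people's careers?"),
    Question("Human Layer", "How do you roll out this policy without triggering a fear response?"),
    Question("Human Layer", "How do you keep it alive when AI changes faster than your review cycle?"),
]


def unanswered(answers: dict[int, str]) -> list[Question]:
    """Return every question with no non-empty answer.

    `answers` maps a 1-based question number to the policy text that addresses it.
    """
    return [
        question
        for number, question in enumerate(SEVEN_QUESTIONS, start=1)
        if not answers.get(number, "").strip()
    ]


# Hypothetical example: a policy that only says something about data (question 2).
old_policy = {2: "Don't input confidential client data."}

gaps = unanswered(old_policy)
for question in gaps:
    print(f"[{question.layer}] {question.text}")
```

Anything this prints is a gap to raise with Legal and IT. A script like this can only tell you that a question has some answer, not whether the answer is any good. That part still needs people in a room.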
